Apache Hive Training | Master Data Warehousing on Hadoop

Become a Big Data Expert with Apache Hive — Instructor-led Live Online Sessions

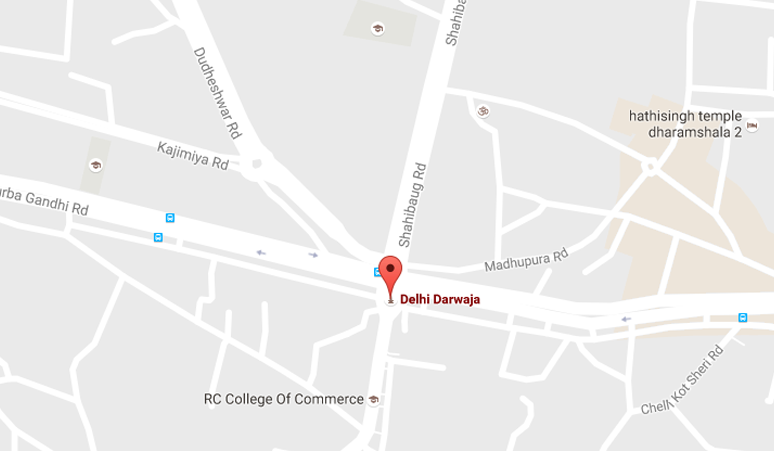

Apache Hive Training by Laliwala IT is designed for data engineers, analysts, and big data enthusiasts who want to master HiveQL and data warehousing on Hadoop. Based in Ahmedabad, Gujarat, India, we deliver live, interactive, project-based training covering everything from Hive fundamentals to advanced querying, partitioning, and performance tuning.

Our online Apache Hive course features real-time instructor-led classes, hands-on projects, flexible schedules, and career guidance. Whether you're a beginner or an experienced professional, this training will make you proficient in handling massive datasets using Hive.

Course Modules — Comprehensive Apache Hive Training (4-5 Weeks | 35+ Hours)

- Module 1: Big Data & Hive Fundamentals – Hive architecture, Hive vs RDBMS, data warehousing concepts, Hadoop ecosystem integration

- Module 2: Hive Installation & Configuration – Setting up the Hive metastore, Derby vs MySQL metastore, HiveServer2, Beeline CLI

- Module 3: HiveQL Basics – Database & table creation, data types, loading data, INSERT, SELECT, WHERE clauses

- Module 4: Advanced HiveQL – JOINs (inner, outer, semi), subqueries, UNION, GROUP BY, HAVING, ORDER BY vs SORT BY

- Module 5: Table Partitioning & Bucketing – Static vs dynamic partitioning, bucketing benefits, partition pruning optimization

- Module 6: Hive Built-in Functions – String, date, math, aggregate functions, conditionals, type conversion functions

- Module 7: User Defined Functions (UDF) – Creating custom UDFs, UDAFs (aggregate), UDTFs (table-generating), Java integration

- Module 8: Hive File Formats & Compression – Text, SequenceFile, RCFile, ORC, Parquet; compression codecs (Snappy, Gzip, LZO)

- Module 9: Performance Optimization – Cost-based optimizer (CBO), vectorization, Tez execution engine, join optimizations

- Module 10: Hive with Other Tools – Hive + HBase integration, Hive + Spark, Hive Streaming, HCatalog, WebHCat

- Module 11: Security & Authorization – Authentication, authorization, Apache Ranger integration, storage-based authorization

- Module 12: Real-World Capstone Project – Build an ETL pipeline, process logs/clickstream data, generate analytics reports

What's Included in Apache Hive Training?

- Live instructor-led classes (real-time Q&A, screen sharing, doubt clearing)

- Recorded sessions for revision anytime

- Hands-on assignments & industry-level big data projects

- Study materials (PDFs, Hive scripts, datasets)

- Certificate of completion (recognized by industry partners)

- Placement assistance – resume & interview prep, freelance guidance

- Lifetime access to course updates and alumni community

Detailed Curriculum Highlights

Week 1-2: Core Hive & HiveQL Mastery

- Introduction to the Hadoop ecosystem and Hive's role

- Local Hadoop cluster setup (Cloudera/Hortonworks sandbox)

- Hive CLI, Beeline, and HiveServer2 configuration

- Creating managed vs external tables

- Loading data from HDFS (LOAD DATA, INSERT OVERWRITE)

- Complex data types: ARRAY, MAP, STRUCT, UNIONTYPE

- Writing nested queries and common table expressions (CTE)

- Window functions: ROW_NUMBER, RANK, LEAD, LAG, OVER clause

Week 3-4: Advanced Optimization & Integration

- Partition strategies: choosing partition keys, avoiding small partitions

- Bucketing – evenly distributing data, sampling optimization

- ORC & Parquet columnar formats – performance benchmarks

- Writing custom UDFs in Java, and Python scripts invoked through Hive's TRANSFORM clause (streaming)

- Cost-based optimizer (CBO) and statistics gathering

- Vectorized query execution and Tez vs MapReduce engine comparison

- Hive on Spark – configuration, memory management

- Integration with HBase – Hive-HBase table mapping

Week 5: Enterprise Hive & Capstone Project

- HCatalog – shared schema across Pig, MapReduce, Spark

- WebHCat REST API – submitting Hive jobs remotely

- Apache Ranger integration for fine-grained access control

- ACID properties – transactions, update/delete in Hive

- Streaming ingestion with Kafka + Hive Streaming API

- Performance tuning: map-side and reduce-side join optimizations, skew joins

- Monitoring Hive queries – Tez UI, Hive logs, explain plans

- Capstone: build an end-to-end data warehouse for e-commerce analytics

Tools & Technologies Covered

- Apache Hive 3.x, HiveQL, Hadoop HDFS, YARN

- Execution Engines: MapReduce, Tez, Spark

- Metastore: MySQL, PostgreSQL, Derby

- File Formats: ORC, Parquet, Avro, SequenceFile

- Compression: Snappy, Gzip, LZO, Zstandard

- Integration: HBase, Spark SQL, Kafka, Ranger, Atlas

- Development: Eclipse, IntelliJ, Python, Java

Why Choose Laliwala IT for Apache Hive Online Training?

- Industry Expert Trainers: 10+ years of Big Data & Hadoop experience

- Live Project Experience: Build at least 3 real-world ETL pipelines + final portfolio

- Flexible Batches: Weekday & weekend options, recorded backup for missed classes

- Small Batch Size: Max 10-12 students for personalized attention

- Affordable Fees: High-quality training at competitive rates from our Ahmedabad hub

- Job Assistance: Regular tie-ups with IT companies & placement cell

- Certification: ISO- and government-recognized certificate after successful completion

- 24/7 Lab Access: Online Hadoop clusters & learning management system

- Global Recognition: Trained students from India, USA, UK, Canada, Australia, UAE

- Post-training Support: Doubt clearing via dedicated forum & email for 6 months

Who Should Join?

- Data Analysts wanting to process big data using SQL-like queries

- ETL Developers moving to Big Data platforms

- Database Administrators learning data warehousing on Hadoop

- Software Engineers interested in the Big Data ecosystem

- Data Scientists requiring efficient data processing

- College students seeking job-ready Big Data skills

- Working professionals aiming for Hadoop/Hive certification

© 2025 Laliwala IT. All rights reserved.