Apache Nutch | Web Crawler & Search Engine Course

Master Web Crawling & Search Indexing — Live Instructor-led Apache Nutch Training

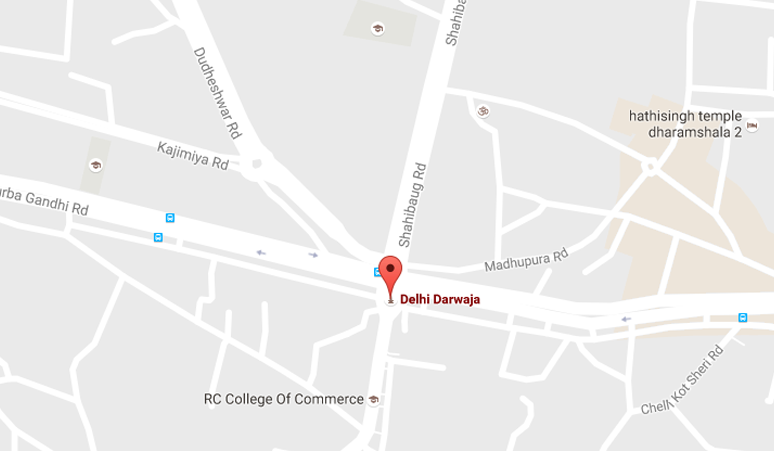

The Apache Nutch course by Laliwala IT is designed for data engineers, search specialists, and IT professionals who want to master web crawling, scraping, search engine indexing, and big data processing. Based in Ahmedabad, Gujarat, India, we deliver live, interactive, project-based training that covers everything from Nutch fundamentals to advanced plugin development, Solr/Elasticsearch integration, and distributed crawling.

Our online Apache Nutch course features real-time instructor-led classes, hands-on crawling projects, flexible schedules, and career guidance. Whether you're a beginner or looking to upgrade your search engineering skills, this training will turn you into a job-ready Web Crawling Specialist.

Course Modules — Comprehensive Apache Nutch Training (5-6 Weeks | 40+ Hours)

- Module 1: Introduction to Web Crawling & Apache Nutch – Crawler architecture, Nutch components, Use cases, Search engine basics

- Module 2: Nutch Setup & Configuration – Installation, Environment setup, Configuration files (nutch-site.xml, regex-urlfilter.txt)

- Module 3: Crawling Fundamentals – Inject, Generate, Fetch, Parse, Updatedb, Segment management

- Module 4: URL Filtering & Normalization – Regex filters, URL normalizers, Scope management, Robots.txt handling

- Module 5: Parsing & Content Extraction – Parse plugins, HTML/XPath extraction, Metadata, Language detection

- Module 6: Indexing with Apache Solr – Solr setup, Schema configuration, Indexing pipelines, Deduplication

- Module 7: Indexing with Elasticsearch – Elasticsearch integration, Mapping, Bulk indexing, Search queries

- Module 8: Nutch Plugin Architecture – Plugin system, Custom parse plugins, Indexing plugins, Protocol plugins

- Module 9: Advanced Crawling Strategies – Deep web crawling, Politeness policies, Throttling, Crawl delay

- Module 10: Distributed Crawling with Apache Hadoop – Nutch on HDFS, MapReduce jobs, Scaling across nodes

- Module 11: Crawl Monitoring & Management – CrawlDB inspection, Link inversion, Scoring, Scoring filters

- Module 12: Real-World Capstone Project – Build a vertical search engine for an e-commerce or news domain

What's Included in Apache Nutch Training?

- Live Instructor-led classes (real-time Q&A, screen sharing, doubt clearing)

- Recorded sessions for revision anytime

- Hands-on assignments & industry-level crawling projects

- Study materials (PDFs, configuration templates, code repositories)

- Certificate of completion (recognized by industry partners)

- Placement assistance – resume & interview prep, search engineer guidance

- Lifetime access to course updates and student community

Detailed Curriculum Highlights

Week 1-2: Nutch Fundamentals & Crawling Lifecycle

- Understanding web crawling challenges: politeness, scale, freshness

- Installing Nutch from source and binary distributions

- Configuring nutch-site.xml, regex-urlfilter.txt, regex-normalize.xml

- Injecting seed URLs into CrawlDB

- Generate, Fetch, Parse, Updatedb cycle explained

- Understanding segments and their structure

- Running a complete crawl using the bin/crawl script

- Inspecting CrawlDB, LinkDB, and segments with Nutch tools
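
To make the Inject, Generate, Fetch, Parse, Updatedb cycle above more concrete, here is a minimal in-memory sketch in Python. It is not Nutch code: the `FAKE_WEB` dictionary, the `CrawlDatum` class, and every function name are hypothetical stand-ins chosen for illustration, and a plain dictionary plays the role of the CrawlDB.

```python
from dataclasses import dataclass

# Hypothetical stand-in for the web: URL -> outlinks found on that page.
FAKE_WEB = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b"],
    "https://example.com/b": [],
}


@dataclass
class CrawlDatum:
    """Per-URL record kept in the toy CrawlDB (illustrative only)."""
    status: str = "unfetched"  # either "unfetched" or "fetched"


def inject(crawldb, seeds):
    """Inject: add seed URLs to the CrawlDB if they are not already known."""
    for url in seeds:
        crawldb.setdefault(url, CrawlDatum())


def generate(crawldb, top_n):
    """Generate: pick up to top_n unfetched URLs as the next fetch list."""
    due = sorted(url for url, datum in crawldb.items() if datum.status == "unfetched")
    return due[:top_n]


def fetch_and_parse(fetch_list):
    """Fetch + Parse: build a toy 'segment' mapping each URL to its outlinks."""
    return {url: FAKE_WEB.get(url, []) for url in fetch_list}


def updatedb(crawldb, segment):
    """Updatedb: mark fetched URLs and add newly discovered outlinks."""
    for url, outlinks in segment.items():
        crawldb[url].status = "fetched"
        for link in outlinks:
            crawldb.setdefault(link, CrawlDatum())


def crawl(seeds, rounds, top_n=10):
    """Run the cycle for a number of rounds, stopping early if nothing is due."""
    crawldb = {}
    inject(crawldb, seeds)
    for _ in range(rounds):
        fetch_list = generate(crawldb, top_n)
        if not fetch_list:
            break
        updatedb(crawldb, fetch_and_parse(fetch_list))
    return crawldb
```

Calling `crawl(["https://example.com/"], rounds=1)` fetches only the seed and discovers two new URLs; a second round fetches those. In a real Nutch crawl, each step is a separate job and segments are stored on disk rather than held in memory.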

Week 3-4: Filtering, Parsing & Indexing

- Regular expression URL filtering: include/exclude patterns

- URL normalization: removing duplicate parameters, trailing slashes

- Robots.txt compliance and politeness configuration

- Parsing HTML, XML, PDF, and other document types

- XPath and CSS selector extraction using parse plugins

- Configuring Apache Solr: schemaless mode, managed schema

- Indexing into Solr: the bin/nutch index and dedup commands (solrindex in older Nutch 1.x releases)

- Elasticsearch integration: setting up Nutch's Elasticsearch indexer plugin (indexer-elastic)
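
The include/exclude filtering topic above can be illustrated with a small Python sketch. Rules in regex-urlfilter.txt are lines that start with `+` (accept) or `-` (reject) followed by a regular expression; the first rule that matches decides, and a URL that matches no rule is rejected. The rule set, the `example.com` domain, and the function names below are assumptions made for this example, and Python's `re` module stands in for the Java regex engine that Nutch uses.

```python
import re

# Hypothetical rules written in the style of regex-urlfilter.txt.
RULES = r"""
# skip common image and style assets
-\.(gif|jpg|png|css|js)$
# skip URLs containing characters typical of queries
-[?*!@=]
# accept pages on the (hypothetical) example.com domain and its subdomains
+^https?://([a-z0-9-]+\.)*example\.com/
"""


def load_rules(text):
    """Parse '+regex' / '-regex' lines, ignoring blank lines and # comments."""
    rules = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        sign, pattern = line[0], line[1:]
        if sign not in "+-":
            raise ValueError(f"rule must start with + or -: {line!r}")
        rules.append((sign == "+", re.compile(pattern)))
    return rules


def accepts(rules, url):
    """Return the verdict of the first matching rule; reject if none match."""
    for allow, pattern in rules:
        if pattern.search(url):
            return allow
    return False


rules = load_rules(RULES)
```

With these rules, `accepts(rules, "https://www.example.com/products/")` is accepted, while an image URL, a URL with a query string, or a URL on another domain is rejected. Rule order matters: moving the `+` rule to the top would let image and query URLs on the domain through.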

Week 5: Plugin Development & Distributed Crawling

- Nutch plugin architecture: extension points, plugin.xml

- Writing a custom parse plugin (Parser or HtmlParseFilter extension point) for structured data extraction

- Writing a custom indexing plugin (IndexingFilter extension point) to add custom fields to Solr/ES

- Protocol plugin for authentication or custom protocols

- Distributed crawling with Apache Hadoop (HDFS integration)

- Configuring Nutch to run on a YARN cluster

- Scoring plugins: OPIC scoring, custom scoring logic

- De-duplication, URL filtering best practices for large crawls
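
As a rough illustration of the link-based scoring topic above, the following Python sketch shows one simplified idea behind OPIC-style scoring: a page passes its current score, split equally, to the pages it links to. This is an assumption-laden toy, not the logic of Nutch's scoring plugin, which differs in detail; the function name and the sample link graph are hypothetical.

```python
def distribute_scores(scores, outlinks):
    """One scoring round over a link graph.

    scores:   dict mapping page -> current score
    outlinks: dict mapping page -> list of pages it links to
    Each page keeps its own score and adds an equal share of it
    to every page it links to. Returns a new dict.
    """
    updated = dict(scores)
    for page, links in outlinks.items():
        if not links:
            continue  # a page without outlinks passes nothing on
        share = scores.get(page, 0.0) / len(links)
        for target in links:
            updated[target] = updated.get(target, 0.0) + share
    return updated


# Hypothetical link graph: the seed links to a and b, and a links to b.
graph = {"seed": ["a", "b"], "a": ["b"]}
round1 = distribute_scores({"seed": 1.0}, graph)
round2 = distribute_scores(round1, graph)
```

After the second round, page `b` has the highest score because it receives contributions from both `seed` and `a`. A generator could then prefer higher-scoring URLs when building the next fetch list.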

Week 6: Advanced Crawling & Capstone Project

- Deep web crawling: an introduction to handling forms and JavaScript-driven pages

- Focused crawling and topic-specific crawling strategies

- Incremental crawling, refresh, and recrawl strategies

- Monitoring crawls: logs, metrics, performance tuning

- Real-world project: Build a job search engine crawler

- Project: News aggregator with Solr-based search frontend

- Code review, optimization, and presentation for recruiters
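
The incremental crawling and recrawl topic in this week can be sketched as a simple "which URLs are due?" check. The 30-day interval, the function name, and the sample timestamps below are assumptions for illustration; in Nutch the refetch interval is configurable and the scheduling logic is more involved.

```python
from datetime import datetime, timedelta

# Assumed refetch interval for this example.
DEFAULT_INTERVAL = timedelta(days=30)


def due_for_refetch(last_fetched, now, interval=DEFAULT_INTERVAL):
    """Return URLs (sorted) whose last fetch is at least `interval` old.

    last_fetched: dict mapping URL -> datetime of the last successful fetch
    now:          the reference time for the check
    """
    return [
        url
        for url, fetched_at in sorted(last_fetched.items())
        if now - fetched_at >= interval
    ]


# Hypothetical fetch history.
history = {
    "https://example.com/old": datetime(2025, 1, 1),
    "https://example.com/recent": datetime(2025, 2, 20),
}
due = due_for_refetch(history, now=datetime(2025, 3, 1))
```

Here only the page last fetched on 1 January is due on 1 March. A shorter interval for fast-changing pages (news, job listings) and a longer one for static pages is a common refinement.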

Why Choose Laliwala IT for Apache Nutch Online Training?

- Industry Expert Trainers: 10+ years of search & big data experience

- Live Project Experience: Build real-world search engines

- Flexible Batches: Weekday & weekend options, recorded backup

- Small Batch Size: Max 10-12 students for personalized attention

- Affordable Fees: High-quality training at competitive rates from Ahmedabad hub

- Job Assistance: Regular tie-ups with search & data-focused IT companies

- Certification: ISO- and government-recognized certificate after successful completion

- 24/7 Lab Access: Online practice servers & learning management system

- Global Recognition: Trained students from India, USA, UK, Canada, Australia, UAE

- Post-training Support: Doubt clearing via dedicated forum & email for 6 months

Tools & Technologies Covered

- Apache Nutch 1.x/2.x, Apache Solr 8/9, Elasticsearch 7/8, Apache Hadoop

- Java, Linux/Unix commands, Bash scripting

- Regular Expressions, XPath, CSS Selectors

- Plugin Development: Java, XML configuration

- Build Tools: Apache Ant, Maven, Gradle

- Search Frontend: Solr Admin UI, Kibana (for Elasticsearch), Custom Web UI basics

Who Should Join?

- Data engineers wanting to learn web crawling at scale

- Search engineers building custom search solutions

- Big data developers working with Hadoop ecosystem

- Fresh graduates aiming for data acquisition careers

- IT professionals building vertical search engines

- E-commerce teams implementing product data crawling

- Research organizations requiring web data collection


© 2025 Laliwala IT. All rights reserved.